2 - Neural machine translation with attention

- If you had to translate a book's paragraph from French to English, you would not read the whole paragraph, then close the book and translate.

- Even during the translation process, you would read/re-read and focus on the parts of the French paragraph corresponding to the parts of the English you are writing down.

- The attention mechanism tells a Neural Machine Translation model which parts of the input to focus on at each step.

2.1 - Attention mechanism

In this part, you will implement the attention mechanism presented in the lecture videos.

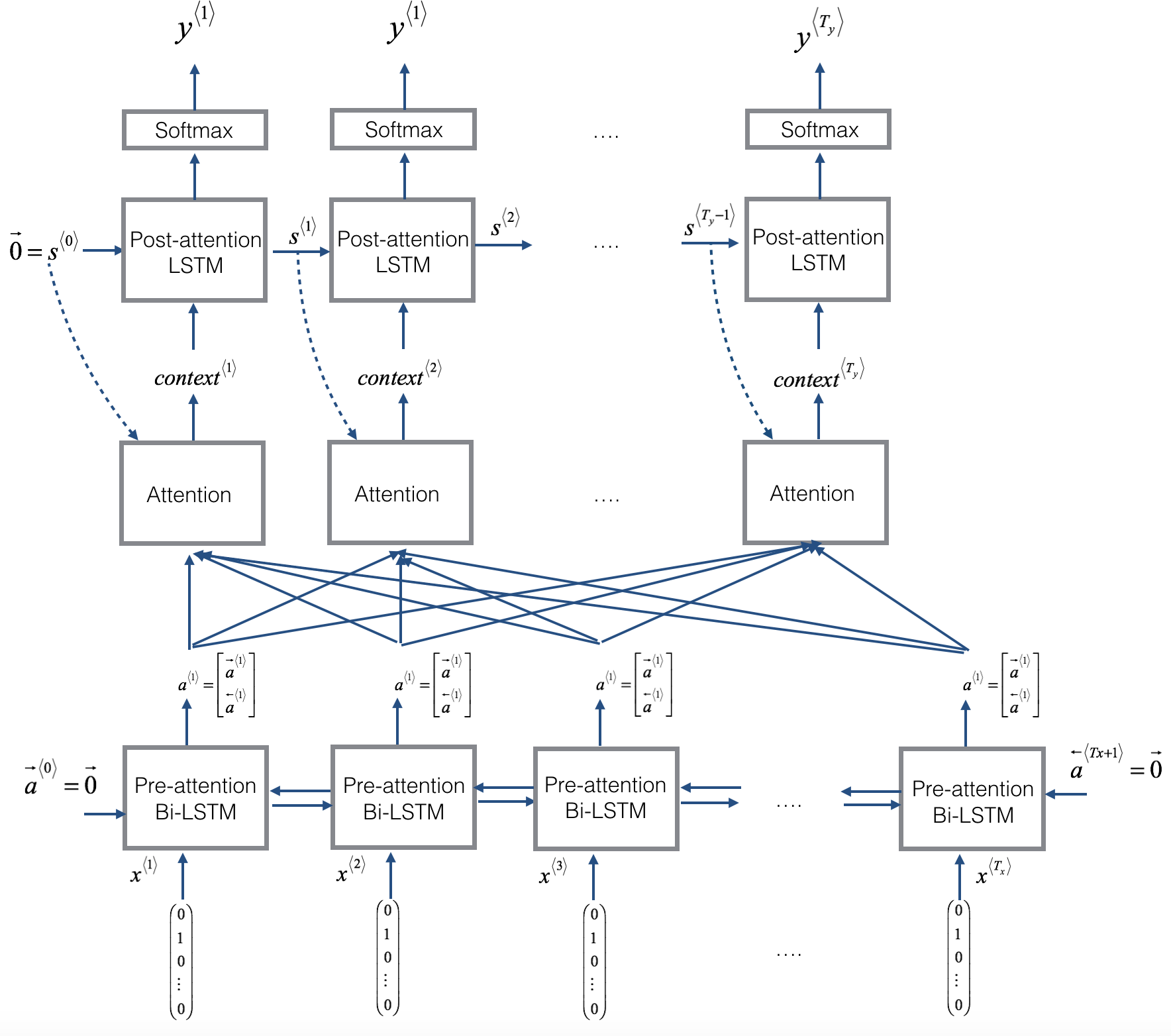

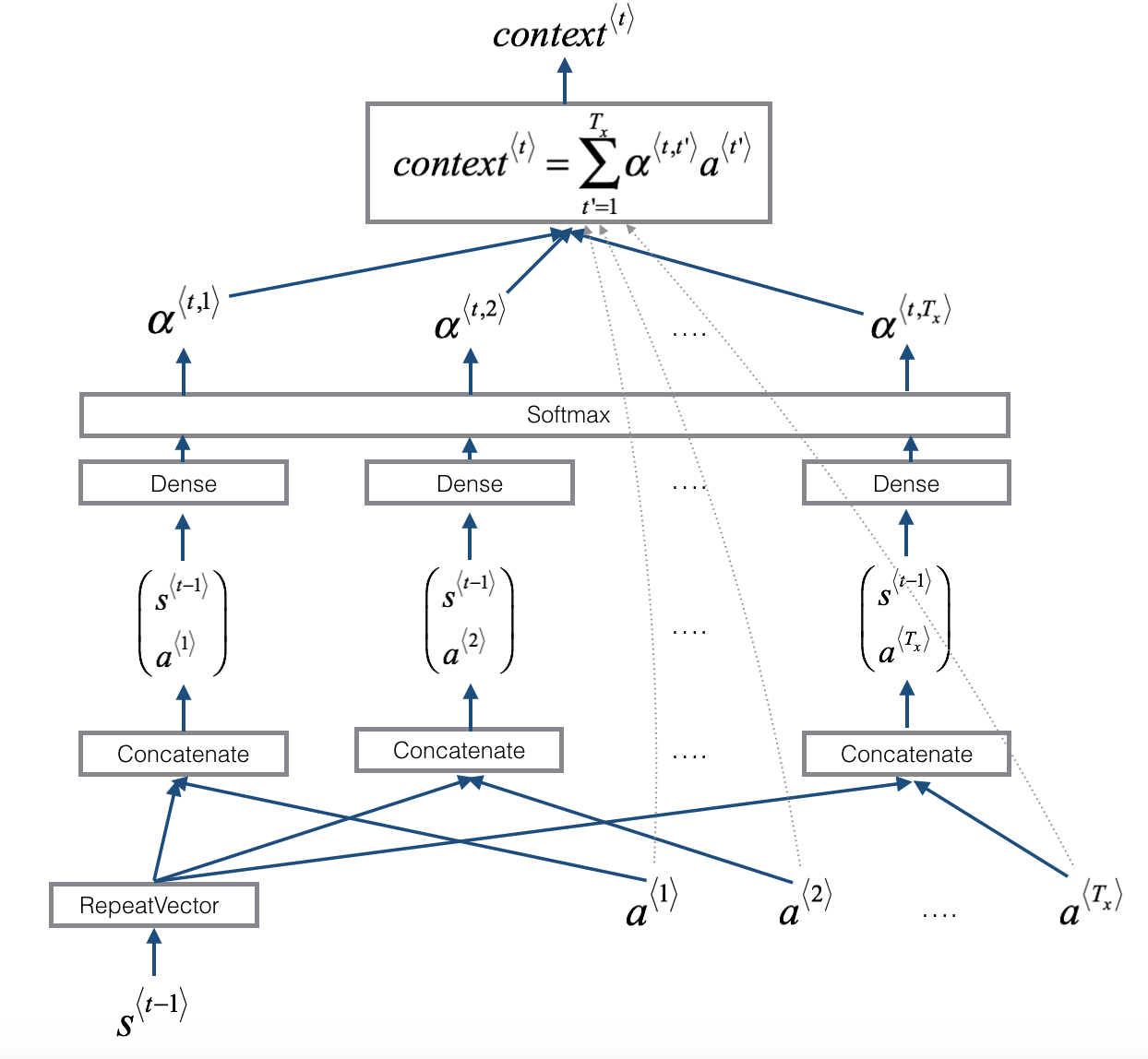

- Here is a figure to remind you how the model works.

- The diagram on the left shows the attention model.

- The diagram on the right shows what one "attention" step does to calculate the attention variables $\alpha^{\langle t, t' \rangle}$.

- The attention variables $\alpha^{\langle t, t' \rangle}$ are used to compute the context variable $context^{\langle t \rangle}$ for each timestep in the output ($t=1, \ldots, T_y$).
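One attention step can be sketched in plain NumPy. This is a minimal sketch, not the assignment's Keras solution: the weight names `W1`, `b1`, `W2`, `b2`, the `tanh` and `relu` activations, the hidden size `n_a = 32`, and the random inputs are all assumptions made for illustration. The sizes are chosen to match $T_x = 30$ with 64-dimensional encoder and decoder states.

```python
import numpy as np

def softmax(x, axis=0):
    # Subtract the max for numerical stability before exponentiating.
    e = np.exp(x - np.max(x, axis=axis, keepdims=True))
    return e / np.sum(e, axis=axis, keepdims=True)

def one_step_attention(a, s_prev, W1, b1, W2, b2):
    """a: (Tx, 2*n_a) encoder states, s_prev: (n_s,) previous decoder state."""
    Tx = a.shape[0]
    s_rep = np.tile(s_prev, (Tx, 1))              # repeat s_prev Tx times: (Tx, n_s)
    concat = np.concatenate([a, s_rep], axis=-1)  # (Tx, 2*n_a + n_s)
    e = np.tanh(concat @ W1 + b1)                 # intermediate energies: (Tx, 10)
    energies = np.maximum(0.0, e @ W2 + b2)       # one energy per input step: (Tx, 1)
    alphas = softmax(energies, axis=0)            # attention weights over Tx: (Tx, 1)
    context = alphas.T @ a                        # weighted sum of encoder states: (1, 2*n_a)
    return alphas, context

rng = np.random.default_rng(0)
Tx, n_a, n_s = 30, 32, 64
a = rng.normal(size=(Tx, 2 * n_a))
s_prev = rng.normal(size=(n_s,))
W1 = rng.normal(size=(2 * n_a + n_s, 10)) * 0.1
b1 = np.zeros(10)
W2 = rng.normal(size=(10, 1)) * 0.1
b2 = np.zeros(1)

alphas, context = one_step_attention(a, s_prev, W1, b1, W2, b2)
print(alphas.shape, context.shape)
```

The weights sum to 1 over the $T_x$ input positions, so the context is a convex combination of the encoder states. The model repeats this step once for each of the $T_y$ output positions.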


</table>

| Property | Value |
| --- | --- |
| **Total params:** | 52,960 |
| **Trainable params:** | 52,960 |
| **Non-trainable params:** | 0 |
| **bidirectional_1's output shape** | (None, 30, 64) |
| **repeat_vector_1's output shape** | (None, 30, 64) |
| **concatenate_1's output shape** | (None, 30, 128) |
| **attention_weights's output shape** | (None, 30, 1) |
| **dot_1's output shape** | (None, 1, 64) |
| **dense_3's output shape** | (None, 11) |
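The total of 52,960 parameters can be checked by hand. The sketch below is only an illustration: it assumes an input vocabulary of 37 symbols, a Bi-LSTM with `n_a = 32` units per direction, a post-attention LSTM with `n_s = 64` units, and a 10-unit dense layer inside the attention block. The helper names `lstm_params` and `dense_params` are hypothetical.

```python
def lstm_params(n_units, n_inputs):
    # 4 gates, each with input weights, recurrent weights and a bias vector.
    return 4 * (n_units * (n_inputs + n_units) + n_units)

def dense_params(n_inputs, n_outputs):
    # Weight matrix plus one bias per output unit.
    return n_inputs * n_outputs + n_outputs

n_a, n_s = 32, 64          # assumed hidden sizes
human_vocab_size = 37      # assumed input vocabulary size
machine_vocab_size = 11    # matches the (None, 11) output shape

bidirectional = 2 * lstm_params(n_a, human_vocab_size)   # forward + backward LSTM
dense_tanh = dense_params(2 * n_a + n_s, 10)             # first attention dense layer
dense_energy = dense_params(10, 1)                       # second attention dense layer
post_attention_lstm = lstm_params(n_s, 2 * n_a)          # decoder LSTM fed by the context
output_dense = dense_params(n_s, machine_vocab_size)     # softmax output layer

total = (bidirectional + dense_tanh + dense_energy
         + post_attention_lstm + output_dense)
print(total)  # 52960
```

Layers such as RepeatVector, Concatenate, the softmax activation and Dot have no weights, so they do not contribute to the count.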

Compile the model

- After creating your model in Keras, you need to compile it and define the loss function, optimizer and metrics you want to use.

Sample code

optimizer = Adam(lr=..., beta_1=..., beta_2=..., decay=...)

model.compile(optimizer=..., loss=..., metrics=[...])

### START CODE HERE ### (≈2 lines)

opt = Adam(lr = 0.005, beta_1 = 0.9, beta_2 = 0.999, decay = 0.01)

model.compile(optimizer = opt, loss = "categorical_crossentropy", metrics = ['accuracy'])

### END CODE HERE ###
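To get a feel for the `decay` argument, here is a small sketch. It assumes the time-based rule used by legacy Keras optimizers, where the learning rate at a given update is `lr / (1 + decay * iterations)`; newer Keras versions handle schedules differently, so treat this as an approximation rather than a description of your installed version. The function name `effective_lr` is hypothetical.

```python
def effective_lr(lr, decay, iterations):
    # Time-based decay, assumed to match legacy Keras optimizers.
    return lr / (1.0 + decay * iterations)

m, batch_size = 10000, 100
updates_per_epoch = m // batch_size  # 100 parameter updates per epoch

for epoch in range(4):
    iterations = epoch * updates_per_epoch
    print(epoch, effective_lr(0.005, 0.01, iterations))
```

Under this assumption, the learning rate has already halved from 0.005 to 0.0025 after a single epoch of 100 updates.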

Define inputs and outputs, and fit the model

The last step is to define all your inputs and outputs to fit the model:

- You have input X of shape $(m = 10000, T_x = 30)$ containing the training examples.

- You need to create s0 and c0 to initialize your post_attention_LSTM_cell with zeros.

- Given the model() you coded, you need the "outputs" to be a list of $T_y = 10$ elements, each of shape (m, 11), one element per output character position.

- The list outputs[0][i], ..., outputs[Ty-1][i] represents the true labels (characters) corresponding to the $i^{th}$ training example (X[i]). outputs[j][i] is the one-hot true label of the $j^{th}$ character in the $i^{th}$ training example.

s0 = np.zeros((m, n_s))

c0 = np.zeros((m, n_s))

outputs = list(Yoh.swapaxes(0,1))
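The effect of the swapaxes call is easiest to see on a small example. The sketch below uses synthetic labels and a tiny `m = 4`; the names `Yoh_demo` and `outputs_demo` are hypothetical stand-ins for `Yoh` and `outputs`.

```python
import numpy as np

m, Ty, vocab = 4, 10, 11   # small m for illustration only
rng = np.random.default_rng(0)
labels = rng.integers(0, vocab, size=(m, Ty))   # synthetic label indices
Yoh_demo = np.eye(vocab)[labels]                # one-hot labels: (m, Ty, vocab)

# Swap the example axis and the time axis, then split along the new first axis.
outputs_demo = list(Yoh_demo.swapaxes(0, 1))    # Ty arrays of shape (m, vocab)
print(len(outputs_demo), outputs_demo[0].shape)

# outputs_demo[j][i] is the one-hot label of character j in example i.
i, j = 2, 5
assert np.array_equal(outputs_demo[j][i], Yoh_demo[i, j])
```

Each list element lines up with one of the model's 10 output heads, which is the layout Keras expects for a multi-output model.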

Let's now fit the model and run it for one epoch.

model.fit([Xoh, s0, c0], outputs, epochs=1, batch_size=100)

Epoch 1/1 10000/10000 [==============================] - 54s - loss: 17.1519 - dense_6_loss_1: 1.3138 - dense_6_loss_2: 1.0667 - dense_6_loss_3: 1.7901 - dense_6_loss_4: 2.7081 - dense_6_loss_5: 0.8430 - dense_6_loss_6: 1.3397 - dense_6_loss_7: 2.7386 - dense_6_loss_8: 0.9896 - dense_6_loss_9: 1.7369 - dense_6_loss_10: 2.6255 - dense_6_acc_1: 0.4573 - dense_6_acc_2: 0.6558 - dense_6_acc_3: 0.2793 - dense_6_acc_4: 0.0701 - dense_6_acc_5: 0.9564 - dense_6_acc_6: 0.2614 - dense_6_acc_7: 0.0399 - dense_6_acc_8: 0.9690 - dense_6_acc_9: 0.2063 - dense_6_acc_10: 0.0897

<keras.callbacks.History at 0x7f43d3cca940>
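The overall loss in the log above is the sum of the 10 per-position losses. A quick check, using the values copied from that log (the variable names are hypothetical):

```python
position_losses = [1.3138, 1.0667, 1.7901, 2.7081, 0.8430,
                   1.3397, 2.7386, 0.9896, 1.7369, 2.6255]
position_accs = [0.4573, 0.6558, 0.2793, 0.0701, 0.9564,
                 0.2614, 0.0399, 0.9690, 0.2063, 0.0897]

total_loss = sum(position_losses)
mean_acc = sum(position_accs) / len(position_accs)

print(round(total_loss, 4))   # close to the reported 17.1519, up to rounding
print(round(mean_acc, 4))
```

Positions 5 and 8 already reach about 96% accuracy after one epoch. In the YYYY-MM-DD output format those two positions are always hyphens, so they are the easiest characters to learn.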

While training you can see the loss as well as the accuracy on each of the 10 positions of the output. The table below gives you an example of what the accuracies could be if the batch had 2 examples:

We have run this model for longer, and saved the weights. Run the next cell to load our weights. (By training a model for several minutes, you should be able to obtain a model of similar accuracy, but loading our model will save you time.)

model.load_weights('models/model.h5')

You can now see the results on new examples.

EXAMPLES = ['3 May 1979', '5 April 09', '21th of August 2016', 'Tue 10 Jul 2007', 'Saturday May 9 2018', 'March 3 2001', 'March 3rd 2001', '1 March 2001']

for example in EXAMPLES:
    source = string_to_int(example, Tx, human_vocab)
    source = np.array(list(map(lambda x: to_categorical(x, num_classes=len(human_vocab)), source))).swapaxes(0,1)
    prediction = model.predict([source, s0, c0])
    prediction = np.argmax(prediction, axis = -1)
    output = [inv_machine_vocab[int(i)] for i in prediction]
    print("source:", example)
    print("output:", ''.join(output),"\n")

source: 3 May 1979
output: 1979-05-03

source: 5 April 09
output: 2009-05-05

source: 21th of August 2016
output: 2016-08-21

source: Tue 10 Jul 2007
output: 2007-07-10

source: Saturday May 9 2018
output: 2018-05-09

source: March 3 2001
output: 2001-03-03

source: March 3rd 2001
output: 2001-03-03

source: 1 March 2001
output: 2001-03-01
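The decoding step in the loop above (argmax over the last axis, then a lookup in inv_machine_vocab) can be tried without a trained model. The sketch below is illustrative only: `inv_machine_vocab_demo` is a hypothetical mapping whose symbol order may differ from the real inv_machine_vocab, and `fake_prediction` fabricates probabilities in place of model.predict.

```python
import numpy as np

# Hypothetical index-to-character map; the real inv_machine_vocab may order
# its 11 symbols differently.
inv_machine_vocab_demo = dict(enumerate("-0123456789"))
char_to_index = {c: i for i, c in inv_machine_vocab_demo.items()}

def fake_prediction(target):
    # Mimic a multi-output predict call: one (1, 11) probability array
    # per output position, peaked at the target character.
    preds = []
    for ch in target:
        p = np.full((1, 11), 0.01)
        p[0, char_to_index[ch]] = 0.90
        preds.append(p)
    return preds

prediction = fake_prediction("1979-05-03")
indices = np.argmax(prediction, axis=-1).ravel()   # most likely symbol per position
decoded = "".join(inv_machine_vocab_demo[int(i)] for i in indices)
print(decoded)  # 1979-05-03
```

Because each position is decoded independently, a confident but wrong character at one position does not affect the others.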

You can also change these examples to test with your own examples. The next part will give you a better sense of what the attention mechanism is doing--i.e., what part of the input the network is paying attention to when generating a particular output character.

3 - Visualizing Attention (Optional / Ungraded)

Since the problem has a fixed output length of 10, it is also possible to carry out this task using 10 different softmax units to generate the 10 characters of the output. But one advantage of the attention model is that each part of the output (such as the month) knows it needs to depend only on a small part of the input (the characters in the input giving the month). We can visualize which part of the input each part of the output is looking at.

Consider the task of translating "Saturday 9 May 2018" to "2018-05-09". If we visualize the computed $\alpha^{\langle t, t' \rangle}$ we get this:

Notice how the output ignores the "Saturday" portion of the input. None of the output timesteps are paying much attention to that portion of the input. We also see that 9 has been translated as 09 and May has been correctly translated into 05, with the output paying attention to the parts of the input it needs to make the translation. The year mostly requires it to pay attention to the input's "18" in order to generate "2018".

3.1 - Getting the attention weights from the network

Let's now visualize the attention values in your network. We'll propagate an example through the network, then visualize the values of $\alpha^{\langle t, t' \rangle}$.

To figure out where the attention values are located, let's start by printing a summary of the model.

model.summary()

| Layer (type) | Output Shape | Param # | Connected to |
| --- | --- | --- | --- |
| input_1 (InputLayer) | (None, 30, 37) | 0 | |
| s0 (InputLayer) | (None, 64) | 0 | |
| bidirectional_1 (Bidirectional) | (None, 30, 64) | 17920 | input_1[0][0] |
| repeat_vector_2 (RepeatVector) | (None, 30, 64) | 0 | s0[0][0], lstm_2[0][0], lstm_2[1][0], lstm_2[2][0], lstm_2[3][0], lstm_2[4][0], lstm_2[5][0], lstm_2[6][0], lstm_2[7][0], lstm_2[8][0] |
| concatenate_2 (Concatenate) | (None, 30, 128) | 0 | bidirectional_1[0][0], repeat_vector_2[0][0], bidirectional_1[0][0], repeat_vector_2[1][0], bidirectional_1[0][0], repeat_vector_2[2][0], bidirectional_1[0][0], repeat_vector_2[3][0], bidirectional_1[0][0], repeat_vector_2[4][0], bidirectional_1[0][0], repeat_vector_2[5][0], bidirectional_1[0][0], repeat_vector_2[6][0], bidirectional_1[0][0], repeat_vector_2[7][0], bidirectional_1[0][0], repeat_vector_2[8][0], bidirectional_1[0][0], repeat_vector_2[9][0] |
| dense_4 (Dense) | (None, 30, 10) | 1290 | concatenate_2[0][0], concatenate_2[1][0], concatenate_2[2][0], concatenate_2[3][0], concatenate_2[4][0], concatenate_2[5][0], concatenate_2[6][0], concatenate_2[7][0], concatenate_2[8][0], concatenate_2[9][0] |
| dense_5 (Dense) | (None, 30, 1) | 11 | dense_4[0][0], dense_4[1][0], dense_4[2][0], dense_4[3][0], dense_4[4][0], dense_4[5][0], dense_4[6][0], dense_4[7][0], dense_4[8][0], dense_4[9][0] |
| attention_weights (Activation) | (None, 30, 1) | 0 | dense_5[0][0], dense_5[1][0], dense_5[2][0], dense_5[3][0], dense_5[4][0], dense_5[5][0], dense_5[6][0], dense_5[7][0], dense_5[8][0], dense_5[9][0] |
| dot_2 (Dot) | (None, 1, 64) | 0 | attention_weights[0][0], bidirectional_1[0][0], attention_weights[1][0], bidirectional_1[0][0], attention_weights[2][0], bidirectional_1[0][0], attention_weights[3][0], bidirectional_1[0][0], attention_weights[4][0], bidirectional_1[0][0], attention_weights[5][0], bidirectional_1[0][0], attention_weights[6][0], bidirectional_1[0][0], attention_weights[7][0], bidirectional_1[0][0], attention_weights[8][0], bidirectional_1[0][0], attention_weights[9][0], bidirectional_1[0][0] |
| c0 (InputLayer) | (None, 64) | 0 | |
| lstm_2 (LSTM) | [(None, 64), (None, 6 | 33024 | dot_2[0][0], s0[0][0], c0[0][0], dot_2[1][0], lstm_2[0][0], lstm_2[0][2], dot_2[2][0], lstm_2[1][0], lstm_2[1][2], dot_2[3][0], lstm_2[2][0], lstm_2[2][2], dot_2[4][0], lstm_2[3][0], lstm_2[3][2], dot_2[5][0], lstm_2[4][0], lstm_2[4][2], dot_2[6][0], lstm_2[5][0], lstm_2[5][2], dot_2[7][0], lstm_2[6][0], lstm_2[6][2], dot_2[8][0], lstm_2[7][0], lstm_2[7][2], dot_2[9][0], lstm_2[8][0], lstm_2[8][2] |
| dense_6 (Dense) | (None, 11) | 715 | lstm_2[0][0], lstm_2[1][0], lstm_2[2][0], lstm_2[3][0], lstm_2[4][0], lstm_2[5][0], lstm_2[6][0], lstm_2[7][0], lstm_2[8][0], lstm_2[9][0] |

Total params: 52,960

Trainable params: 52,960

Non-trainable params: 0

Navigate through the output of model.summary() above. You can see that the layer named attention_weights outputs the alphas of shape (m, 30, 1) before dot_2 computes the context vector for every time step $t = 0, \ldots, T_y-1$. Let's get the attention weights from this layer.

The function plot_attention_map() pulls out the attention values from your model and plots them.

attention_map = plot_attention_map(model, human_vocab, inv_machine_vocab, "Tuesday 09 Oct 1993", num = 7, n_s = 64);


On the generated plot you can observe the values of the attention weights for each character of the predicted output. Examine this plot and check that the places where the network is paying attention make sense to you.

In the date translation application, you will observe that most of the time attention helps predict the year, and doesn't have much impact on predicting the day or month.
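One way to read an attention map numerically is to look, for each output step, at the input character with the largest weight. The sketch below uses synthetic random weights rather than values from the trained network, and it ignores the padding up to $T_x = 30$, so the printed characters carry no meaning. It only illustrates the bookkeeping on a $(T_y, T_x)$ matrix.

```python
import numpy as np

source = "Tuesday 09 Oct 1993"
Tx, Ty = len(source), 10

# Synthetic scores standing in for the network's attention energies.
rng = np.random.default_rng(1)
scores = rng.normal(size=(Ty, Tx))
attention = np.exp(scores) / np.exp(scores).sum(axis=1, keepdims=True)

# Each row is a distribution over input characters for one output step.
top_input = attention.argmax(axis=1)
for t, idx in enumerate(top_input):
    print(f"output step {t}: strongest input char {source[idx]!r} (index {idx})")
```

With real attention weights, you would expect the rows for the year digits to peak on the year characters of the input.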

Congratulations!

You have come to the end of this assignment.

Here's what you should remember

- Machine translation models can be used to map from one sequence to another. They are useful not just for translating human languages (like French->English) but also for tasks like date format translation.

- An attention mechanism allows a network to focus on the most relevant parts of the input when producing a specific part of the output.

- A network using an attention mechanism can translate from inputs of length $T_x$ to outputs of length $T_y$, where $T_x$ and $T_y$ can be different.

- You can visualize attention weights $\alpha^{\langle t,t' \rangle}$ to see what the network is paying attention to while generating each output.

Congratulations on finishing this assignment! You are now able to implement an attention model and use it to learn complex mappings from one sequence to another.